“Extended Reality” (XR) systems are advanced interactive systems such as Virtual Reality (VR) and Augmented Reality (AR) systems. They have emerged in various domains, ranging from entertainment and cultural heritage to combat training and mission-critical applications. The development and authoring of such systems is an iterative process that also includes quality assurance, to make sure that the resulting systems are correct and deliver a high-quality user experience.

IV4XR

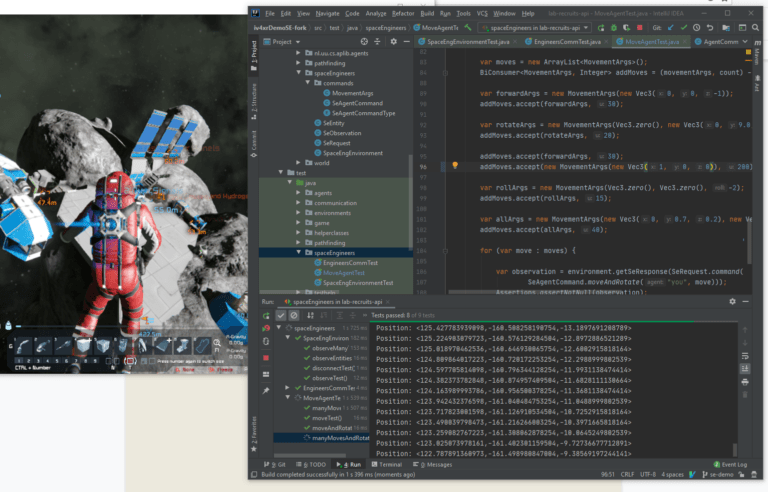

Use Case: Space Engineers

Space Engineers (SE) is a complex open-world game with volumetric physics, developed by Keen Software House, a sister company of GoodAI (GA). In a world with volumetric physics, objects behave like real physical objects with mass, inertia, and velocity, which allows a more realistic simulation of real-world physics. SE is a very popular game with millions of players. In SE, players can build any object from blocks, and the construction behaves as it would in the real world, much like LEGO Technic. SE is also highly “moddable”, which means that we give players the tools to alter the game and its functions in any direction: adding new blocks, changing visuals, etc.

In this pilot, GA is interested in developing intelligent test agents to test SE. The current verification and validation (V&V) process relies on manual testing, assisted only by a simple record-and-replay tool. The tool records human testers playing short, 1-2 minute test sessions in SE. For each session, it records all key presses, mouse movements, and clicks made by the tracked player, and it also takes screenshots. When the SE code changes, the recording can be replayed as an automated test. The tool compares the screenshots taken during recording with those taken during replay and uses the differences to signal regression errors. During testing, the game is configured to behave “almost” deterministically, so this approach works. While this helps to discover regression errors, new test cases must still be created manually whenever new functionality is added, in order to verify its correctness. Once recorded, the new tests can be replayed, but the manual part is costly and difficult to scale.
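
The screenshot comparison step of such a record-and-replay tool can be illustrated with a minimal Python sketch. It is not the actual SE tool, whose internals are not described here. The frame representation (rows of grayscale values), the function names `mean_abs_diff` and `find_regressions`, and the `tolerance` threshold are all assumptions made for illustration. The tolerance is one possible way to absorb the small differences that an “almost” deterministic replay may produce.

```python
from typing import List, Sequence

# Hypothetical representation: a screenshot is a grid of grayscale values (0-255).
Frame = Sequence[Sequence[int]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two same-sized frames."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("frames must have the same dimensions")
    total = sum(abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))
    count = sum(len(row) for row in a)
    return total / count if count else 0.0


def find_regressions(
    recorded: Sequence[Frame],
    replayed: Sequence[Frame],
    tolerance: float = 2.0,  # assumed threshold, would need tuning in practice
) -> List[int]:
    """Return the indices of screenshots that differ by more than the tolerance."""
    if len(recorded) != len(replayed):
        raise ValueError("recording and replay must have the same number of screenshots")
    return [
        i
        for i, (rec, rep) in enumerate(zip(recorded, replayed))
        if mean_abs_diff(rec, rep) > tolerance
    ]


# Two tiny 2x2 "screenshots" per session, made up for the example.
recorded = [[[10, 10], [10, 10]], [[200, 200], [200, 200]]]
replayed = [[[11, 10], [10, 9]], [[200, 50], [200, 200]]]

# Frame 0 differs only by small noise (mean 0.5); frame 1 has a large change (mean 37.5).
print(find_regressions(recorded, replayed))  # prints [1]
```

A stricter tolerance flags more frames and risks false alarms from nondeterminism, while a looser one may hide real regressions. In either case, the recorded session itself still has to be produced by a human tester.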

Web IV4XR

Intelligent Verification/Validation for Extended Reality Based Systems
