We are developing Long-Term Memory (LTM) systems to expand LLM agents' context window, enabling continual learning from interactions and environmental dynamics. Our goal is to create agents capable of lifelong learning, using every new experience to enhance future performance.

As part of our research on continual learning, we are open-sourcing a benchmark that tests agents’ ability to perform tasks requiring advanced use of memory over very long conversations. Among other things, we evaluate the agent’s performance on tasks that require dynamic upkeep of memories or integration of information over long periods of time.

We are open-sourcing:

- The living GoodAI LTM Benchmark.

- Our LTM agents.

- Our experiment data and results.

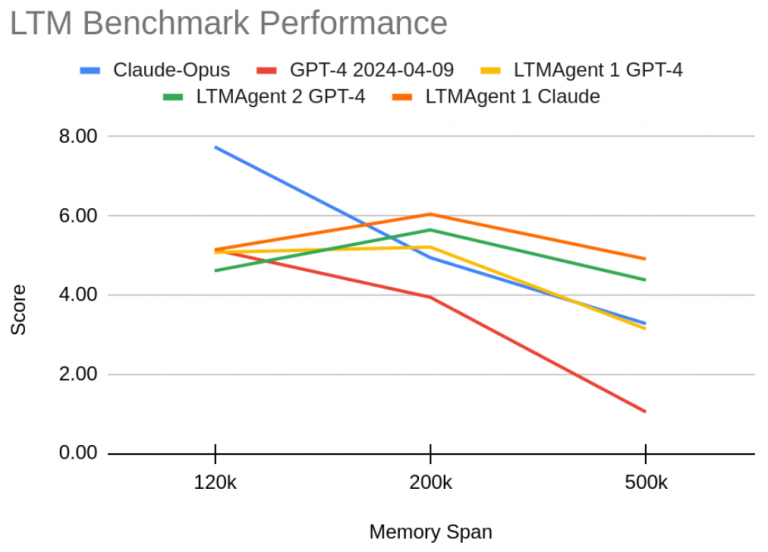

We show that the availability of information is a necessary but not sufficient condition for solving these tasks. In our initial benchmark, our conversational LTM agents with an 8k-token context perform comparably to long-context GPT-4-1106 with a 128k-token context. In a larger benchmark with 10 times higher memory requirements, our conversational LTM agents with an 8k-token context perform 13% better than GPT-4-turbo with a 128k-token context, at less than 16% of the cost.

We believe that our results illustrate the usefulness of LTM as a tool that not only extends the context window of LLMs but also makes it dynamic, helping the LLM reason about its past knowledge and therefore better integrate the information in its conversation history. We expect that LTM will ultimately allow agents to learn better and make them capable of lifelong learning.
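
To make the idea of a dynamic, extended context more concrete, here is a minimal toy sketch of retrieval under a fixed token budget. It is not the GoodAI LTM agent implementation: the `ToyMemory` class, the `build_prompt` helper, the word-overlap scoring, and the word-count "tokenizer" are all hypothetical simplifications chosen only for illustration.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


def tokenize(text: str) -> list[str]:
    """Crude stand-in for a real tokenizer: lowercase word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


@dataclass
class ToyMemory:
    """Hypothetical store that keeps every message outside the LLM context."""

    entries: list[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.entries.append(text)

    def retrieve(self, query: str, budget: int) -> list[str]:
        """Return the most query-relevant entries that fit in `budget` tokens."""
        query_words = set(tokenize(query))
        scored = []
        for index, entry in enumerate(self.entries):
            overlap = len(query_words & set(tokenize(entry)))
            if overlap > 0:
                scored.append((overlap, index, entry))
        # Highest overlap first; ties go to the more recent entry.
        scored.sort(key=lambda item: (-item[0], -item[1]))
        selected, used = [], 0
        for _, index, entry in scored:
            cost = len(tokenize(entry))
            if used + cost <= budget:
                selected.append((index, entry))
                used += cost
        # Restore chronological order so the prompt reads naturally.
        return [entry for _, entry in sorted(selected)]


def build_prompt(memory: ToyMemory, question: str, budget: int) -> str:
    """Assemble a prompt from recalled memories plus the new question."""
    recalled = memory.retrieve(question, budget)
    lines = ["Relevant memories:"] + [f"- {m}" for m in recalled]
    lines.append(f"User: {question}")
    return "\n".join(lines)


if __name__ == "__main__":
    memory = ToyMemory()
    memory.add("My favourite colour is green.")
    memory.add("The weather was rainy on Monday.")
    memory.add("Actually, my favourite colour is now blue.")
    print(build_prompt(memory, "What is my favourite colour?", budget=8))
```

With a budget of 8 word-tokens, only the more recent statement about the colour fits, so the outdated one is left out of the prompt. With a larger budget both statements are recalled, and the model still has to work out which one is current, which is one way to see why having the information available is necessary but not sufficient.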