Olga Afanasjeva, Jan Feyereisl, Marek Havrda, Martin Holec, Seán Ó hÉigeartaigh, Martin Poliak

Summary

- Promising strides are being made in research towards artificial general intelligence systems. This progress might lead to an apparent winner-takes-all race for AGI.

- Concerns have been raised that such a race could create incentives to skimp on safety and to defy established agreements between key players.

- The AI Roadmap Institute held a workshop to begin interdisciplinary discussion on how to avoid scenarios where such a dangerous race could occur.

- The focus was on scoping the problem, defining relevant actors, and visualizing possible scenarios of the AI race through example roadmaps.

- The workshop was the first step in preparation for the AI Race Avoidance round of the General AI Challenge that aims to tackle this difficult problem via citizen science and promote AI safety research beyond the boundaries of the small AI safety community.

Scoping the problem

With the advent of artificial intelligence (AI) in most areas of our lives, the stakes are rising at every level. Investments into companies developing machine intelligence applications are reaching astronomical amounts. Despite the rather narrow focus of most existing AI technologies, the extreme competition is real and it directly impacts the distribution of researchers among research institutions and private enterprises.

With the goal of artificial general intelligence (AGI) in sight, the competition on many fronts will become acute with potentially severe consequences regarding the safety of AGI.

The first general AI system will be disruptive and transformative. First-mover advantage will be decisive in determining the winner of the race, due to the expected exponential growth in the system's capabilities and the subsequent difficulty other parties would have in catching up. There is a chance that lengthy and tedious AI safety work ceases to be a priority when the race is on. The risk of AI-related disaster increases when developers do not devote sufficient attention and resources to the safety of such a powerful system [1].

Once this Pandora’s box is opened, it will be hard to close. We have to act before this happens and hence the question we would like to address is:

How can we avoid general AI research becoming a race between researchers, developers and companies, where AI safety gets neglected in favor of faster deployment of powerful, but unsafe general AI?

Motivation for this post

As a community of AI developers, we should strive to avoid the AI race. Some work has been done on this topic in the past [1,2,3,4,5], but the problem is largely unsolved. We need to focus the efforts of the community to tackle this issue and avoid a potentially catastrophic scenario in which developers race towards the first general AI system while sacrificing the safety of humankind and their own.

This post marks “step 0” that we have taken to tackle the issue. It summarizes the outcomes of a workshop held by the AI Roadmap Institute on 29th May 2017, at GoodAI head office in Prague, with the participation of Seán Ó hÉigeartaigh (CSER), Marek Havrda, Olga Afanasjeva, Martin Poliak (GoodAI), Martin Holec (KISK MUNI) and Jan Feyereisl (GoodAI & AI Roadmap Institute). We focused on scoping the problem, defining relevant actors, and visualizing possible scenarios of the AI race.

This workshop is the first in a series held by the AI Roadmap Institute in preparation for the AI Race Avoidance round of the General AI Challenge (described at the bottom of this page and planned to launch in late 2017). Posing the AI race avoidance problem as a worldwide challenge is a way to encourage the community to focus on solving this problem, explore this issue further and ignite interest in AI safety research.

By publishing the outcomes of this and the future workshops, and launching the challenge focused on AI race avoidance, we would like to promote AI safety research beyond the boundaries of the small AI safety community.

The issue should be subject to a wider public discourse, and should benefit from cross-disciplinary work of behavioral economists, psychologists, sociologists, policy makers, game theorists, security experts, and many more. We believe that transparency is an essential part of solving many of the world’s direst problems and the AI race is no exception. This in turn may reduce regulation over-shooting and unreasonable political control that could hinder AI research.

Proposed line of thinking about the AI race: Example Roadmaps

One approach for starting to tackle the issue of AI race avoidance, and laying down the foundations for thorough discussion, is the creation of concrete roadmaps that outline possible scenarios of the future. Scenarios can then be compared, and mitigation strategies for negative futures can be suggested.

We used two simple methodologies for creating example roadmaps:

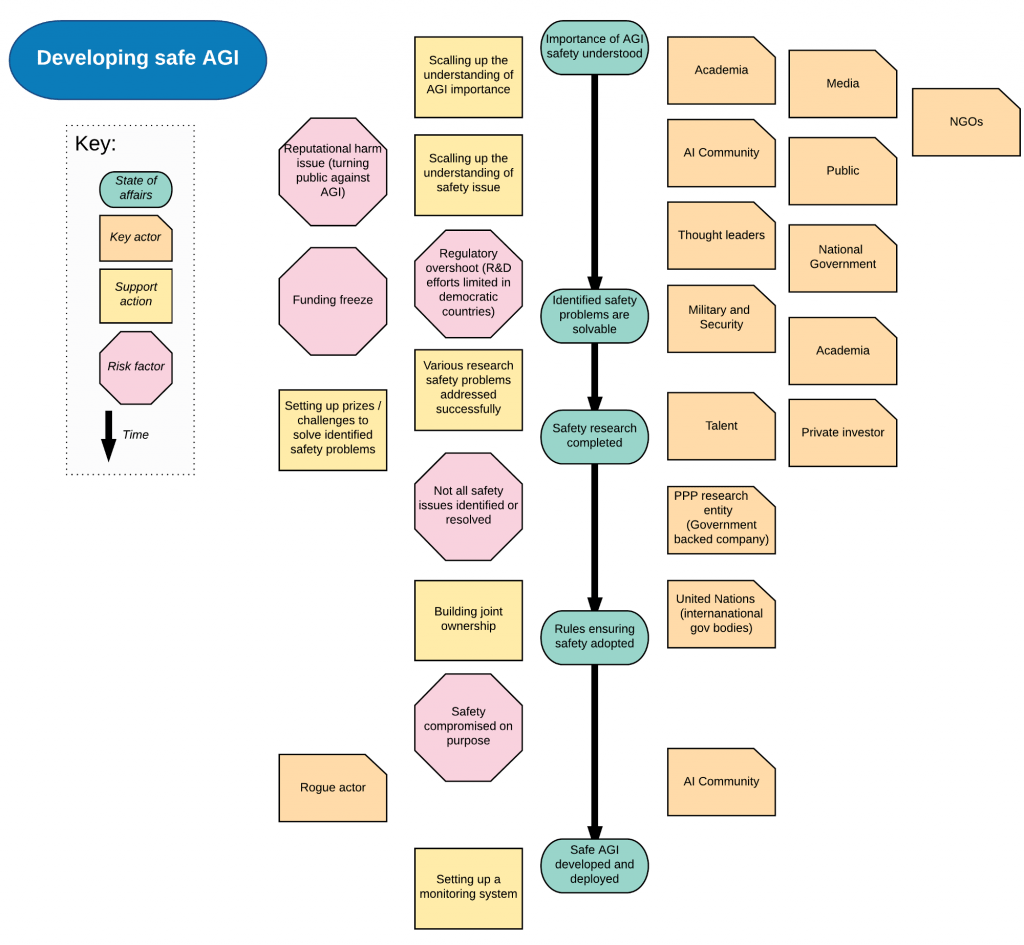

Methodology 1: a simple linear development of affairs is depicted by various shapes and colors representing the following notions: state of affairs, key actor, action, risk factor. The notions are grouped around each state of affairs in order to illustrate principal relevant actors, actions and risk factors.

Figure 1: This example roadmap depicts the safety issue before an AGI is developed. It is meant to be read top-down: the arrow connecting states of affairs depicts time. Entities representing actors, actions and factors are placed around the time arrow, closest to the states of affairs that they influence the most.

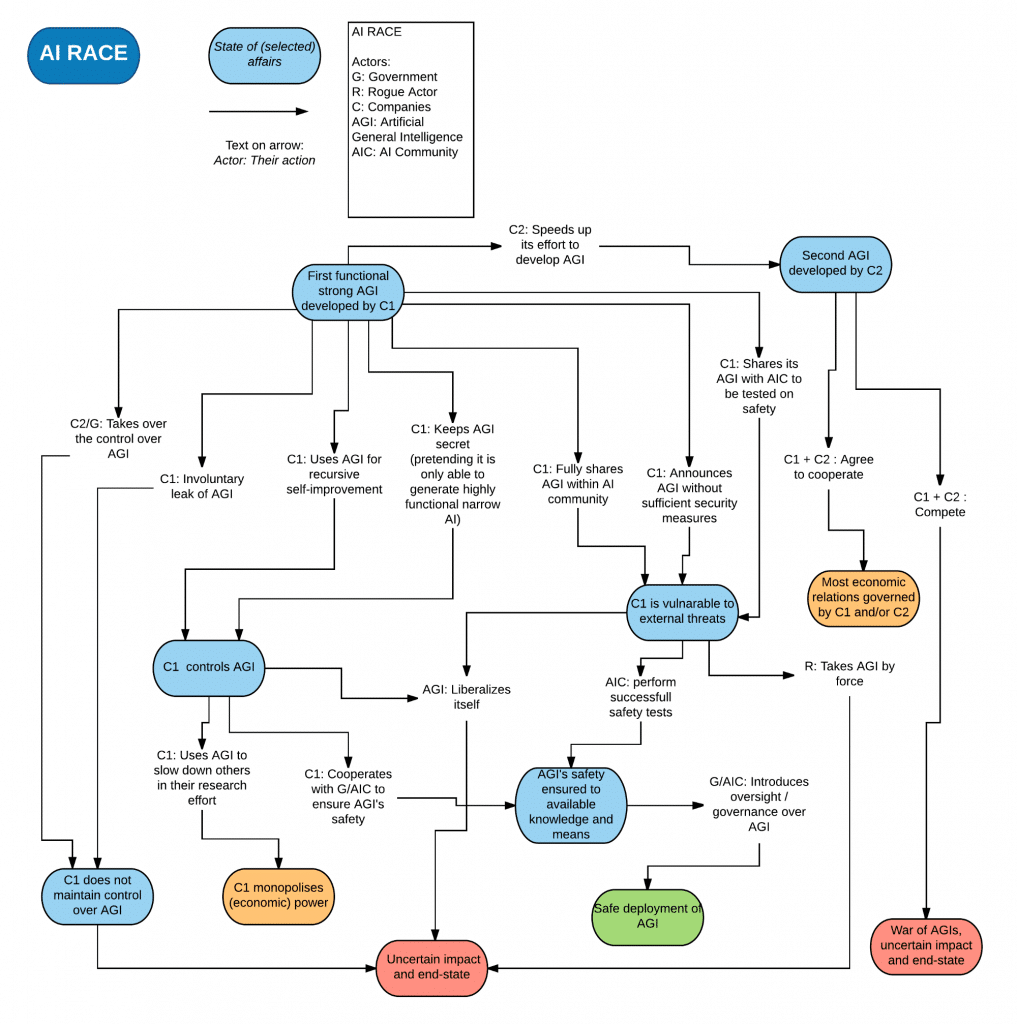

Methodology 2: each node in a roadmap represents a state, and each link, or transition, represents a decision-driven action by one of the main actors (such as a company/AI developer, government, rogue actor, etc.).

Figure 2: The example roadmap above visualises various scenarios from the point when the very first hypothetical company (C1) develops an AGI system. The roadmap primarily focuses on the dilemmas of C1. Furthermore, the roadmap visualises possible decisions made by key identified actors in various states of affairs, in order to depict potential roads to various outcomes. Traffic-light color coding is used to visualize the potential outcomes. Our aim was not to present all the possible scenarios, but a few vivid examples out of the vast spectrum of probable futures.
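One way to make Methodology 2 concrete is to store a roadmap as a small directed graph and enumerate the routes through it. The Python sketch below is only an illustration: the class name `Roadmap`, the states, the actions, and the color assigned to each outcome are hypothetical and are not taken from Figure 2. It also assumes that the roadmap contains no cycles.

```python
from dataclasses import dataclass, field


@dataclass
class Roadmap:
    # state -> list of (actor, action, next_state) transitions
    transitions: dict = field(default_factory=dict)
    # terminal state -> traffic-light color of that outcome
    outcomes: dict = field(default_factory=dict)

    def add(self, state, actor, action, next_state):
        """Record that `actor` can take `action` in `state`, leading to `next_state`."""
        self.transitions.setdefault(state, []).append((actor, action, next_state))

    def scenarios(self, state, path=()):
        """Yield (decisions, color) for every route from `state` to an outcome."""
        if state in self.outcomes:
            yield list(path), self.outcomes[state]
            return
        for actor, action, next_state in self.transitions.get(state, []):
            yield from self.scenarios(next_state, path + ((actor, action),))


# Hypothetical toy roadmap, invented for illustration only.
roadmap = Roadmap()
roadmap.add("C1 develops AGI", "C1", "keeps AGI secret", "secrecy")
roadmap.add("C1 develops AGI", "C1", "shares with safety alliance", "cooperation")
roadmap.add("secrecy", "rogue actor", "steals the system", "unsafe deployment")
roadmap.add("secrecy", "C1", "invests in safety", "controlled AGI")
roadmap.outcomes = {
    "unsafe deployment": "red",
    "controlled AGI": "amber",
    "cooperation": "green",
}

for decisions, color in roadmap.scenarios("C1 develops AGI"):
    steps = " -> ".join(f"{actor}: {action}" for actor, action in decisions)
    print(f"[{color}] {steps}")
```

Listing every route with its color makes it easier to compare scenarios side by side and to see which decisions by which actor separate a green outcome from a red one.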

During the workshop, a number of important issues were raised. For example, the need to distinguish different time-scales for which roadmaps can be created, and different viewpoints (good/bad scenario, different actor viewpoints, etc.).

Timescale issue

Roadmapping is frequently a subjective endeavor and hence multiple approaches towards building roadmaps exist. One of the first issues encountered during the workshop concerned time variance. A roadmap created with near-term milestones in mind will be significantly different from long-term roadmaps; nevertheless, both timelines are interdependent. Rather than taking an explicit view on short-/long-term roadmaps, it might be beneficial to consider these probabilistically. For example, what roadmap could be built if there were a 25% chance of general AI being developed within the next 15 years and a 75% chance of achieving this goal in 15–400 years?
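To illustrate what a probabilistic view of the timeline might look like, the following Python sketch turns the 25% / 75% example into a cumulative probability that general AI has arrived by a given year. The function name `arrival_probability` and the assumption that arrival is equally likely at any point inside each interval are ours, made purely for illustration; the workshop did not specify a distribution.

```python
def arrival_probability(horizon_years, buckets):
    """Probability that AGI arrives within `horizon_years`.

    `buckets` is a list of (start_year, end_year, probability) tuples.
    Assumption: arrival is uniformly distributed inside each bucket.
    """
    total = 0.0
    for start, end, probability in buckets:
        if horizon_years >= end:
            # The whole bucket lies inside the horizon.
            total += probability
        elif horizon_years > start:
            # Only part of the bucket lies inside the horizon.
            total += probability * (horizon_years - start) / (end - start)
    return total


# The example from the text: 25% within 15 years, 75% within 15-400 years.
BUCKETS = [(0, 15, 0.25), (15, 400, 0.75)]

for horizon in (15, 50, 100, 400):
    print(horizon, round(arrival_probability(horizon, BUCKETS), 3))
```

Under these assumptions, a roadmap with a 50-year horizon would be planning for roughly a one-in-three chance that general AI exists by its end, which shows how near-term and long-term roadmaps can be weighed against each other instead of being treated as separate exercises.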

Considering the AI race at different temporal scales is likely to bring out different aspects that should be focused on. For instance, each actor might anticipate a different speed of reaching the first general AI system. This can have a significant impact on the creation of a roadmap and needs to be incorporated in a meaningful and robust way. For example, a “Boy Who Cried Wolf” situation can decrease the established trust between actors and weaken ties between developers, safety researchers, and investors. This in turn could lower belief in the first general AI system being developed at the appropriate time. For example, a low belief in fast AGI arrival could result in miscalculating the risks of unsafe AGI deployment by a rogue actor.

Furthermore, two apparent time “chunks” have been identified that pose significantly different problems to be solved: the pre-AGI and post-AGI eras, i.e. the period before the first general AI is developed and the period after someone is in possession of such a technology.

In the workshop, the discussion focused primarily on the pre-AGI era, as AI race avoidance should be a preventative, rather than a curative, effort. The first example roadmap (Figure 1) presented here covers the pre-AGI era, while the second roadmap (Figure 2), created by GoodAI prior to the workshop, focuses on the time around AGI creation.

Viewpoint issue

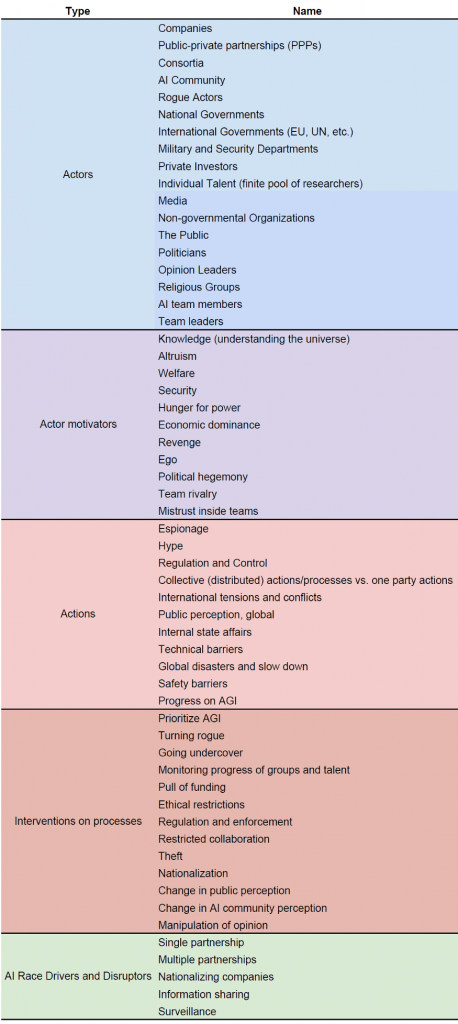

We have identified an extensive (but not exhaustive) list of actors that might participate in the AI race, actions taken by them and by others, the environment in which the race takes place, and the states between which the entire process transitions. Table 1 outlines the identified constituents. Roadmapping the same problem from various viewpoints can help reveal new scenarios and risks.

Modelling and investigating the decision dilemmas of various actors frequently led to the conclusion that cooperation could spread the application of AI safety measures and lessen the severity of race dynamics.

Cooperation issue

Cooperation among the many actors, and a spirit of trust and cooperation in general, is likely to decrease the race dynamics in the overall system. Starting with low-stakes cooperation among different actors, such as talent co-development or collaboration among safety researchers and industry, should allow for incremental building of trust and a better understanding of the issues faced.

Active cooperation between safety experts and AI industry leaders, including cooperation between different AI-developing companies on questions of AI safety, is likely to result in closer ties and in positive information propagation up the chain, leading all the way to regulatory levels. A hands-on approach to safety research with working prototypes is likely to yield better results than purely theoretical argumentation.

One area that needs further investigation in this regard is forms of cooperation that might seem intuitive, but might in fact reduce the safety of AI development [1].

Finding incentives to avoid the AI race

It is natural that any sensible developer would want to prevent their AI system from causing harm to its creator and humanity, whether it is a narrow AI or a general AI system. In the case of a malicious actor, there is presumably a motivation at least not to harm themselves.

When considering various incentives for safety-focused development, we need to find a robust incentive (or a combination of such) that would push even unknown actors towards beneficial A(G)I, or at least an A(G)I that can be controlled [6].

Tying timescale and cooperation issues together

In order to prevent a negative scenario from happening, it should be beneficial to tie the different time-horizons (anticipated speed of AGI’s arrival) and cooperation together. Concrete problems in AI safety (interpretability, bias-avoidance, etc.) [7] are examples of practically relevant issues that need to be dealt with immediately and collectively. At the same time, the very same issues are related to the presumably longer horizon of AGI development. Pointing out such concerns can promote AI safety cooperation between various developers irrespective of their predicted horizon of AGI creation.

Forms of cooperation that maximize AI safety practice

Encouraging the AI community to discuss and attempt to solve issues such as the AI race is necessary; however, it might not be sufficient. We need to find better and stronger incentives to involve actors from a wider spectrum that goes beyond those traditionally associated with developing AI systems. Cooperation can be fostered through many scenarios, such as:

- AI safety research is done openly and transparently,

- Access to safety research is free and anonymous: anyone can be assisted and can draw upon the knowledge base without the need to disclose themselves or what they are working on, and without fear of losing a competitive edge (a kind of “AI safety helpline”),

- Alliances are inclusive towards new members,

- New members are allowed and encouraged to enter global cooperation programs and alliances gradually, which should foster robust trust building and minimize the burden on all parties involved. An example of gradual inclusion in an alliance or a cooperation program is to start cooperating on issues that are low-stakes from the point of view of economic competition, as noted above.

Closing remarks – continuing the work on AI race avoidance

In this post we have outlined our first steps towards tackling the AI race. We welcome you to join in the discussion and help us to gradually come up with ways to minimize the danger of converging to a state in which this could be an issue.

The AI Roadmap Institute will continue to work on AI race roadmapping, identifying further actors, recognizing yet unseen perspectives, time scales and horizons, and searching for risk mitigation scenarios. We will continue to organize workshops to discuss these ideas and publish roadmaps that we create. Eventually we will help build and launch the AI Race Avoidance round of the General AI Challenge. Our aim is to engage the wider research community and to provide it with a sound background to maximize the possibility of solving this difficult problem.

Stay tuned, or even better, join in now.

About the General AI Challenge and its AI Race Avoidance round

The General AI Challenge (Challenge for short) is a citizen science project organized by the general artificial intelligence R&D company GoodAI. GoodAI provided a $5 million fund to be given out in prizes throughout various rounds of the multi-year Challenge. The goal of the Challenge is to incentivize talent to tackle crucial research problems in human-level AI development and to speed up the search for safe and beneficial general artificial intelligence.

The independent AI Roadmap Institute, founded by GoodAI, collaborates with a number of other organizations and researchers on various A(G)I related issues including AI safety. The Institute’s mission is to accelerate the search for safe human-level artificial intelligence by encouraging, studying, mapping and comparing roadmaps towards this goal. The AI Roadmap Institute is currently helping to define the second round of the Challenge, AI Race Avoidance (set to launch in late 2017).

Participants of the second round of the Challenge will deliver analyses and/or solutions to the problem of AI race avoidance. Their submissions will be evaluated in a two-phase process: a) expert acceptance and b) business acceptance. The winning submissions will receive monetary prizes, provided by GoodAI.

Expert acceptance

The expert jury prize will be awarded for an idea, concept, feasibility study, or preferably an actionable strategy that shows the most promise for ensuring safe development and avoiding rushed deployment of potentially unsafe A(G)I as a result of market and competition pressure.

Business acceptance

Industry leaders will be invited to evaluate the top 10 submissions from the expert jury prize and possibly a few more submissions of their choice (these may include proposals that have the potential for a significant breakthrough but fall short on feasibility criteria).

The business acceptance prize is a way to contribute to establishing a balance between the research and the business communities.

The proposals will be treated under an open licence and will be made public together with the names of their authors. Even in the absence of a “perfect” solution, the goal of this round of the General AI Challenge should be fulfilled by advancing the work on this topic and promoting interest in AI safety across disciplines.

References

[1] Armstrong, S., Bostrom, N., & Shulman, C. (2016). Racing to the precipice: a model of artificial intelligence development. AI & SOCIETY, 31(2), 201–206.

[2] Baum, S. D. (2016). On the promotion of safe and socially beneficial artificial intelligence. AI & SOCIETY, (2011), 1–9.

[3] Bostrom, N. (2017). Strategic Implications of Openness in AI Development. Global Policy, 8(2), 135–148.

[4] Geist, E. M. (2016). It’s already too late to stop the AI arms race — We must manage it instead. Bulletin of the Atomic Scientists, 72(5), 318–321.

[5] Conn, A. (2017). Can AI Remain Safe as Companies Race to Develop It?

[6] Orseau, L., & Armstrong, S. (2016). Safely Interruptible Agents.

[7] Amodei, D., Olah, C., Steinhardt, J., Christiano, P., Schulman, J., & Mané, D. (2016). Concrete Problems in AI Safety.