Scalable Environment for Artificial Intelligence and Cumulative Culture

Jakob Foerster's grant work proposes to build a scalable environment aimed at achieving Turing-complete computation.

Julian Togelius's new game-based benchmarks aim to measure and improve open-ended learning, task transfer, and generalization for game AI.

An overview of research findings from the 2022 ICLR workshop exploring the cumulative nature of culture across disciplines.

GoodAI grantee Sebastian Risi will conduct research to optimize neural cellular automata (NCA) to grow neural networks.

Developing "curious" AI agents to autonomously discover and learn a diversity of structures and skills in their environments.

Evolution doesn't produce specific solutions to specific environmental problems. It produces problem-solving machines: machines that can solve problems in other spaces.

Why are we studying social learning in multi-agent systems?

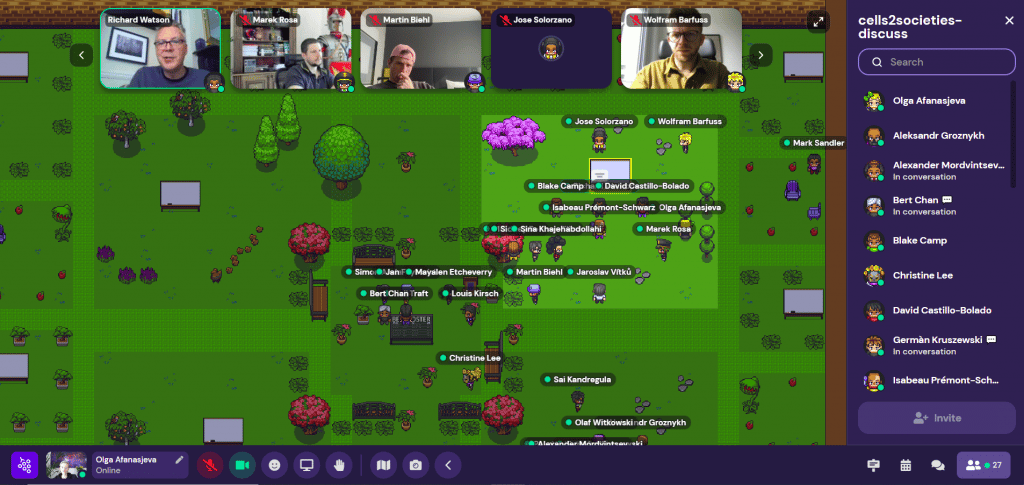

The 2022 workshop, “From Cells to Societies: Collective Learning Across Scales,” will examine natural systems to understand how collective interactions can contribute to new learning approaches in artificial learning systems.

Wendelin Böhmer investigates how restricted neural modules, similar to Badger agents, can learn generalizing sub-tasks and how a message passing architecture can reliably compose their output for out-of-distribution generalization.

Integrating GoodAI’s Badger architecture and principles, new grant recipients Rolando Estrada and Blake Camp aim to develop a continual semi-supervised learning system capable of joint supervised and unsupervised learning.
