In our research, we distinguish between two types of Badger architecture: automatic and manual. Within Automatic Badger, experts gain functionality and specialization through meta-learning and end-to-end optimization in a top-down fashion. In Manual Badger, however, the goal is to find the right set of low-level rules that give rise to the desired behavior. Memetic Badger is a variation of Manual Badger where experts learn through the joint action of evolution and memetics.

Inspired by the cumulative nature of culture in human societies, we seek to replicate dynamics in which multiple experts distribute information through their computation in order to collectively solve a task. Badger experts should be able to communicate with each other so that they can adapt quickly to new tasks while easily reusing past knowledge. When faced with novel but similar tasks, experts should also be able to adapt by rewiring their society; only in certain cases should new experts need to be added. Experts can learn in diverse ways, but learning is always local, which ensures the linear scalability of the system.

Unlike the classical evolutionary scheme, in which genetic information flows vertically from parents to offspring, memetics offers a paradigm in which cultural information (knowledge) is transferred horizontally, during an individual’s lifetime rather than through reproduction. Rather than relying on random mutation and death (which is problematic for architectures like Badger, where experts are units of computation and expert knowledge cannot be lost), meme variation is directional and shaped by preexisting knowledge.
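To make the contrast concrete, here is a minimal sketch that treats a meme as a flat parameter vector. The function names and the interpolation rule are our own illustrative assumptions, not the actual FlatWorld transfer rule: `random_mutation` perturbs a meme in an arbitrary direction, while the hypothetical `horizontal_transfer` moves a receiver's meme part of the way toward a donor's, so the resulting variation is directed by knowledge that already exists in the population.

```python
import numpy as np


def random_mutation(meme: np.ndarray, sigma: float, rng: np.random.Generator) -> np.ndarray:
    """Blind variation: add isotropic Gaussian noise, ignoring every other meme."""
    return meme + rng.normal(0.0, sigma, size=meme.shape)


def horizontal_transfer(receiver: np.ndarray, donor: np.ndarray, rate: float) -> np.ndarray:
    """Directional variation (illustrative assumption): move the receiver's meme
    a fraction `rate` of the way toward a donor's meme.

    The result is a convex combination of two memes already present in the
    population, so the change is shaped by preexisting knowledge, not noise.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be in [0, 1]")
    return (1.0 - rate) * receiver + rate * donor


rng = np.random.default_rng(0)
receiver, donor = np.zeros(4), np.ones(4)
blended = horizontal_transfer(receiver, donor, rate=0.25)  # every entry is 0.25
mutated = random_mutation(receiver, sigma=0.1, rng=rng)    # noise in an arbitrary direction
```

The interpolation keeps every transferred variant on the segment between two memes that have already proven viable in some agent, which is one simple way to read "directional" variation.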

A practical and quick-changing implementation

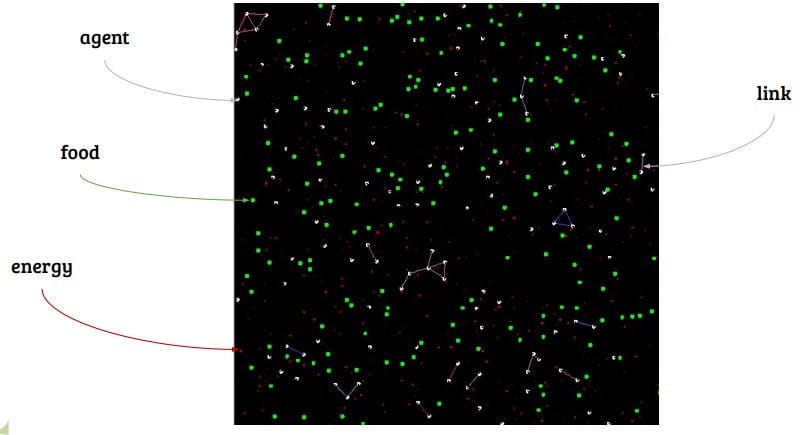

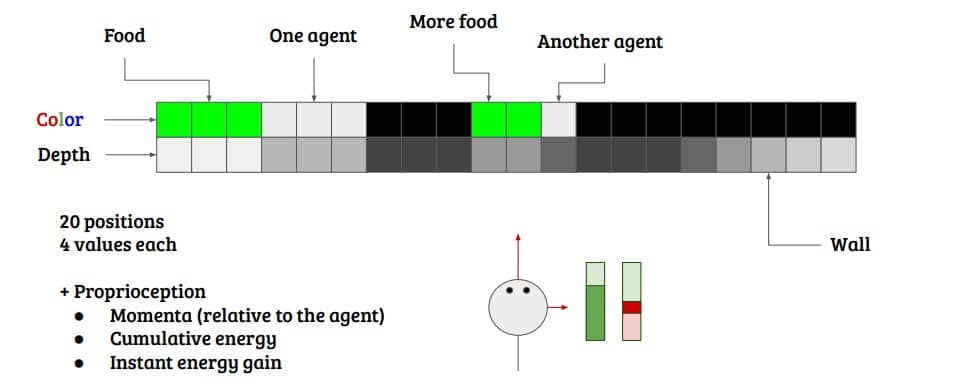

We have been using our own FlatWorld environment as a testbed to explore the underlying mechanisms of memetics and collective intelligence. FlatWorld is inspired by the Animal-AI environment, used in a competition that tested AI agents on cognitive challenges in 3D scenarios, but it takes place in a 2D world. There are still food, agents, and more, but all movement takes place in a plane and agent observations are one-dimensional. Built with fast iteration in mind, it is constantly evolving and has changed significantly since its conception.

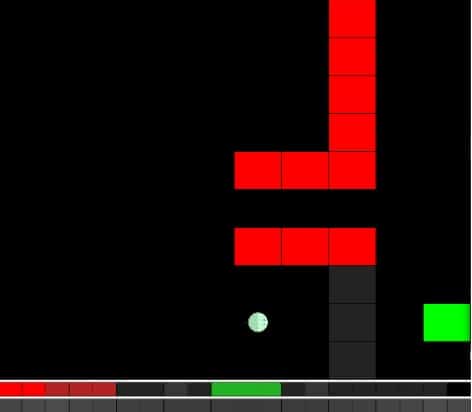

Original version of the FlatWorld environment. An agent is looking at a green food block through a transparent wall. The bottom of the image shows what the agent sees (colors and depth). To reach the food, the agent must avoid the lava blocks and go through the passage. This task requires some degree of memory to cope with occlusion and orientation.
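The caption describes an observation that consists of a single row of colors plus a row of depths. A minimal sketch of how such a one-dimensional observation can be produced in a 2D world is to cast a fan of rays from the agent and record the nearest hit along each ray. Everything here is our own assumption for illustration (objects modeled as circles, the names `Circle` and `observe`, the default field of view and ray count); it is not FlatWorld's actual renderer.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Color = Tuple[int, int, int]


@dataclass
class Circle:
    """Hypothetical stand-in for a FlatWorld object (food, agent, ...)."""
    x: float
    y: float
    radius: float
    color: Color


def ray_circle_distance(ox: float, oy: float, dx: float, dy: float, c: Circle) -> Optional[float]:
    """Distance along a unit-direction ray to the first hit with circle c, or None."""
    # Solve |o + t*d - center|^2 = r^2 for t >= 0; with |d| = 1 the quadratic is
    # t^2 + 2*b*t + (|f|^2 - r^2) = 0, where f = o - center and b = f . d.
    fx, fy = ox - c.x, oy - c.y
    b = fx * dx + fy * dy
    disc = b * b - (fx * fx + fy * fy - c.radius ** 2)
    if disc < 0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):  # nearest intersection first
        if t >= 0:
            return t
    return None


def observe(x: float, y: float, heading: float, objects: List[Circle],
            fov: float = math.pi / 2, n_rays: int = 9, max_depth: float = 20.0,
            background: Color = (0, 0, 0)) -> Tuple[List[float], List[Color]]:
    """Return one depth and one color per ray, spread evenly across the field of view."""
    if n_rays < 2:
        raise ValueError("n_rays must be at least 2")
    depths: List[float] = []
    colors: List[Color] = []
    for i in range(n_rays):
        angle = heading - fov / 2 + fov * i / (n_rays - 1)
        dx, dy = math.cos(angle), math.sin(angle)
        nearest, color = max_depth, background
        for obj in objects:
            t = ray_circle_distance(x, y, dx, dy, obj)
            if t is not None and t < nearest:
                nearest, color = t, obj.color
        depths.append(nearest)
        colors.append(color)
    return depths, colors


food = Circle(5.0, 0.0, 1.0, (0, 255, 0))
depths, colors = observe(0.0, 0.0, 0.0, [food])  # central ray hits the food at depth 4.0
```

Occlusion falls out naturally: each ray keeps only the nearest hit, so anything behind it is invisible in that column, which is why the task in the caption needs memory.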

The implementation relies on Memetic Badger and focuses on multi-agent setups in which thousands of agents coexist and compete for food and survival. To simplify things, we have been using the term agent as a synonym for expert, and rather than hand-crafting challenges with different blocks, we have reduced the number of elements to a minimum (mainly agents and food). We have been experimenting with several mechanisms and configurations in order to foster the emergence of groups and collaboration within a society. Agents can form groups, which brings them advantages such as the ability to share energy and to communicate. Agents can also communicate with each other via an attention mechanism, through which they can select whom they want to communicate with and establish computation circuits, akin to production pipelines.
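One way to picture this selective communication is scaled dot-product attention with a mask: an agent scores its neighbors' keys against its own query, neighbors it should ignore (for instance, agents outside its group) are masked out, and the incoming messages are averaged with the resulting weights. This is a generic sketch under our own assumptions; the function name `attend`, the masking rule, and the vector shapes are illustrative and not FlatWorld's actual mechanism.

```python
import numpy as np


def attend(query: np.ndarray, keys: np.ndarray, values: np.ndarray,
           allowed: np.ndarray) -> np.ndarray:
    """Scaled dot-product attention of one agent over its neighbors' messages.

    query:   (d,)   what the receiving agent is looking for
    keys:    (n, d) one key per neighbor
    values:  (n, m) one message per neighbor
    allowed: (n,)   boolean mask; False excludes a neighbor entirely
    """
    if not allowed.any():
        # Nobody to listen to: receive an empty (all-zero) message.
        return np.zeros(values.shape[1])
    scores = keys @ query / np.sqrt(query.shape[0])
    scores = np.where(allowed, scores, -np.inf)  # masked neighbors get zero weight
    weights = np.exp(scores - scores[allowed].max())  # subtract max for stability
    weights /= weights.sum()
    return weights @ values


query = np.ones(2)
keys = np.array([[1.0, 0.0], [0.0, 1.0], [5.0, 5.0]])
values = np.array([[1.0, 0.0], [0.0, 1.0], [100.0, 100.0]])
message = attend(query, keys, values, np.array([True, True, False]))
```

Masking is what lets an agent ignore a neighbor completely (here the third one, despite its high score), while the softmax weights let it favor some of the remaining partners over others.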

While agents move around, form groups, and gather food, memetics keeps operating in the background. Every agent is governed by a small set of memes (usually 5 to 10), which coordinate through different kinds of voting to decide which meme to rely on, which action to output, whether to form a group with another agent, and so on. These memes are in fact very small neural networks with around 1k parameters each, and when two agents get close enough, a meme transfer can happen. Cultural evolution through horizontal transfer therefore takes place at almost every step, while evolutionary selection acts only occasionally, when a group of memes fails to keep its vessel (the agent) alive.
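As a rough illustration of the paragraph above, here is a sketch of memes as tiny two-layer networks that each vote for an action, combined by a simple plurality vote, together with a proximity-triggered meme transfer. The layer sizes (32 inputs, 28 hidden units, 4 actions, giving 1,040 parameters), the plurality rule, and the copy-one-meme transfer are our own assumptions chosen to match the rough numbers in the text; the several kinds of voting FlatWorld actually uses are not reproduced here.

```python
import copy

import numpy as np


class Meme:
    """A tiny two-layer MLP that maps an observation to a single action vote."""

    def __init__(self, obs_dim: int, hidden: int, n_actions: int,
                 rng: np.random.Generator) -> None:
        self.w1 = rng.normal(0.0, 0.1, size=(obs_dim, hidden))
        self.b1 = np.zeros(hidden)
        self.w2 = rng.normal(0.0, 0.1, size=(hidden, n_actions))
        self.b2 = np.zeros(n_actions)

    def n_params(self) -> int:
        return self.w1.size + self.b1.size + self.w2.size + self.b2.size

    def vote(self, obs: np.ndarray) -> int:
        """Return the index of the action this meme prefers."""
        hidden = np.tanh(obs @ self.w1 + self.b1)
        return int(np.argmax(hidden @ self.w2 + self.b2))


def plurality_action(memes: list, obs: np.ndarray, n_actions: int) -> int:
    """Each meme votes for one action; the most voted action wins (ties -> lowest index)."""
    counts = np.bincount([m.vote(obs) for m in memes], minlength=n_actions)
    return int(np.argmax(counts))


def maybe_transfer(sender: list, receiver: list, distance: float, radius: float,
                   p: float, rng: np.random.Generator) -> bool:
    """If two agents are within `radius`, with probability `p` copy a random meme
    from the sender into a random slot of the receiver (horizontal transfer)."""
    if distance > radius or rng.random() >= p:
        return False
    source = sender[int(rng.integers(len(sender)))]
    slot = int(rng.integers(len(receiver)))
    receiver[slot] = copy.deepcopy(source)  # the receiver gets its own copy
    return True


rng = np.random.default_rng(0)
agent_a = [Meme(32, 28, 4, rng) for _ in range(6)]
agent_b = [Meme(32, 28, 4, rng) for _ in range(6)]
action = plurality_action(agent_a, rng.normal(size=32), n_actions=4)
maybe_transfer(agent_a, agent_b, distance=0.5, radius=1.0, p=0.1, rng=rng)
print(agent_a[0].n_params())  # 1040
```

Because transfer replaces one slot at a time, an agent's meme set drifts gradually through contact with others, which matches the picture of horizontal transfer happening at almost every step.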

This is a form of group selection, the same selective force involved in the appearance of altruism and other complex group behaviors in nature, and it has given us interesting results in the past. By discarding entire groups of memes that fail to coordinate, group selection pushes memes to evolve in ways that favor cooperation with other memes.
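A minimal sketch of this selection step, under our own assumptions: an agent fails when its energy reaches zero, its entire meme set is discarded at once (never individual memes), and the freed slot is refilled with a copy of a randomly chosen survivor's meme set. The `Agent` class, `group_selection_step`, and the `spawn_energy` parameter are hypothetical; the text does not specify how replacement memes are chosen.

```python
import copy
import random
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Hypothetical agent: an energy level plus the meme set that governs it."""
    energy: float
    memes: list = field(default_factory=list)


def group_selection_step(agents: list, rng: random.Random, spawn_energy: float) -> int:
    """Replace the whole meme set of every agent whose energy ran out.

    The unit of selection is the meme set as a group: memes that failed to keep
    their vessel alive are discarded together, and the agent respawns with a copy
    of a surviving agent's memes. Returns the number of agents replaced.
    """
    survivors = [a for a in agents if a.energy > 0]  # fixed before any respawn
    if not survivors:
        return 0
    replaced = 0
    for agent in agents:
        if agent.energy <= 0:
            parent = rng.choice(survivors)
            agent.memes = copy.deepcopy(parent.memes)  # independent copy
            agent.energy = spawn_energy
            replaced += 1
    return replaced


population = [Agent(5.0, ["a", "b"]), Agent(0.0, ["x", "y"]), Agent(-1.0, ["z"])]
n_replaced = group_selection_step(population, random.Random(0), spawn_energy=3.0)
```

Since selection only fires on failure, memes are never judged in isolation; a meme survives only as long as the group it belongs to keeps its agent alive, which is the pressure toward cooperation described above.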

So far, we have observed the emergence of very interesting behaviors in both small and large groups of agents, and FlatWorld has proven to be a great tool for quickly testing new hypotheses and making fast progress. We are constantly working toward finding the right low-level rules that lead to complex group-level behavior and quick adaptation, and we are really encouraged by our recent advances, both in our understanding of the underlying mechanics and in the scaling of experiments. We have recently identified a couple of missing pieces that are key enablers of group coordination and higher-level cognition, so we hope to see more impactful results in the coming months.
